AI AND ROLE PLAYING

If I were asked to play the role of a rocket scientist and give a talk to children, I’d probably put on a white lab coat, collect some pictures of rockets and space, and then learn some jargon to make some impressive-sounding points, such as ‘Every day I do some telemetry and I often have to do a perigee raise manoeuvre, which is really exciting! Especially if I’ve reached burnout velocity in my ACS! But my favourite is when I do an RTLS!’ However, this would only impress the children, who have no way of judging what I’m saying; any rocket scientists listening would be making faces like this 🤦🏻‍♀️ 🤦🏻‍♂️.

Similarly, as I commented in my previous post, AI can only pretend to be an IELTS expert. If you ask it to produce a reading task, it will produce one much faster than I can type. However, a closer inspection shows that the texts and items generated are strings of words associated with a topic. The addition of jargon connected to test writing leads to very convincing claims about ‘construct demand, distractor plausibility, inference load, CEFR alignment, item discrimination, and reliability’. In this post, I’ll take a closer look at the test materials ChatGPT produces, but first, let’s unpack some important jargon (or skip ahead if you aren’t interested in this).

DIFFICULTY, CEFR, DISCRIMINATION, AND RELIABILITY

When you create a test, it’s important to have a clear idea of its level (that is, its difficulty). The CEFR (A1 to C2) is often used to refer to the different levels (this video explains this in more detail).

Discrimination is the ability of a test (or an item) to separate the different levels. An item’s discrimination is calculated by comparing how the high-scorers and the low-scorers answered it: if more high-scorers than low-scorers answer it correctly, we say it has good discrimination. Discrimination is expressed as a number between -1.0 and +1.0. A clearly positive value (e.g. 0.40) shows good discrimination, because more high-scorers than low-scorers got the answer correct. A value of 0.0 means the same number of high- and low-scorers answered correctly, so the item did not discriminate between the levels. A negative value (e.g. -0.40) means more low-scorers than high-scorers answered correctly, which shows there is a problem with the item.

Reliability is the ability of the test to consistently discriminate between levels.
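To make the high-scorer/low-scorer comparison concrete, here is a minimal Python sketch of one common way to calculate it, the upper-lower group method. It assumes the cohort is ranked by total test score and that the top and bottom 27% form the high and low groups; this is a widely used convention, but it is an assumption here, as the post does not say how the groups are chosen. The function name and the example scores are invented for illustration.

```python
from typing import Sequence


def discrimination_index(total_scores: Sequence[float],
                         item_correct: Sequence[bool],
                         group_fraction: float = 0.27) -> float:
    """Upper-lower discrimination index D for a single item (hypothetical helper).

    D = (number correct in high group - number correct in low group) / group size,
    so the result always lies between -1.0 and +1.0.
    """
    if len(total_scores) != len(item_correct):
        raise ValueError("need one total score per test taker")
    if not 0 < group_fraction <= 0.5:
        raise ValueError("group_fraction must be in (0, 0.5] so groups don't overlap")

    # Size of each comparison group (assumed 27% of the cohort by default).
    n = max(1, round(len(total_scores) * group_fraction))

    # Rank test takers by total test score, highest first.
    ranked = sorted(zip(total_scores, item_correct), key=lambda p: p[0], reverse=True)
    high_group = ranked[:n]
    low_group = ranked[-n:]

    high_correct = sum(1 for _, correct in high_group if correct)
    low_correct = sum(1 for _, correct in low_group if correct)
    return (high_correct - low_correct) / n


# Invented example: total scores for a cohort of 10 and their answers to two items.
scores = [40, 38, 35, 33, 30, 28, 25, 22, 20, 15]
good_item = [True, True, True, True, False, True, False, True, False, False]
bad_item = [False, True, False, True, True, False, True, True, True, True]

print(round(discrimination_index(scores, good_item), 2))  # 0.67
print(round(discrimination_index(scores, bad_item), 2))   # -0.67
```

The first item separates the levels in the expected direction; the second is answered correctly more often by the low-scorers, so it ends up with the kind of negative value that signals a faulty item.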

PRE-EDITING AN AI-GENERATED IELTS READING PASSAGE

I asked a test-writing agent on ChatGPT to produce an IELTS reading test with multiple choice items aimed at level B2 (IELTS 5 to 6.5). It generated the test in less than 10 seconds.

There are several stages involved in producing a test, including pre-editing, editing, trialling, more editing, and finally, vetting. There may even be a need to retrial the materials. At the pre-edit stage, the writing team make an initial judgment about a task and decide whether to accept it (and the writer can then continue to work on it) or reject it because it will not work.

Click here to print off the AI-generated test and items, and use the following steps to put it through the pre-edit process.

1) Look at the items.

- Are there any you can answer without reading?

- Do the items and distractors make sense?

2) Read through the text.

- Is the topic appropriate?

- Is the text clear and at the appropriate level (B2 or up to IELTS 6.5)?

- Can you identify any problems?

3) Work through the items.

- Do they assess a range of reading skills?

- Are the distractors plausible?

- Is there one clear answer?

- Is the answer key correct?

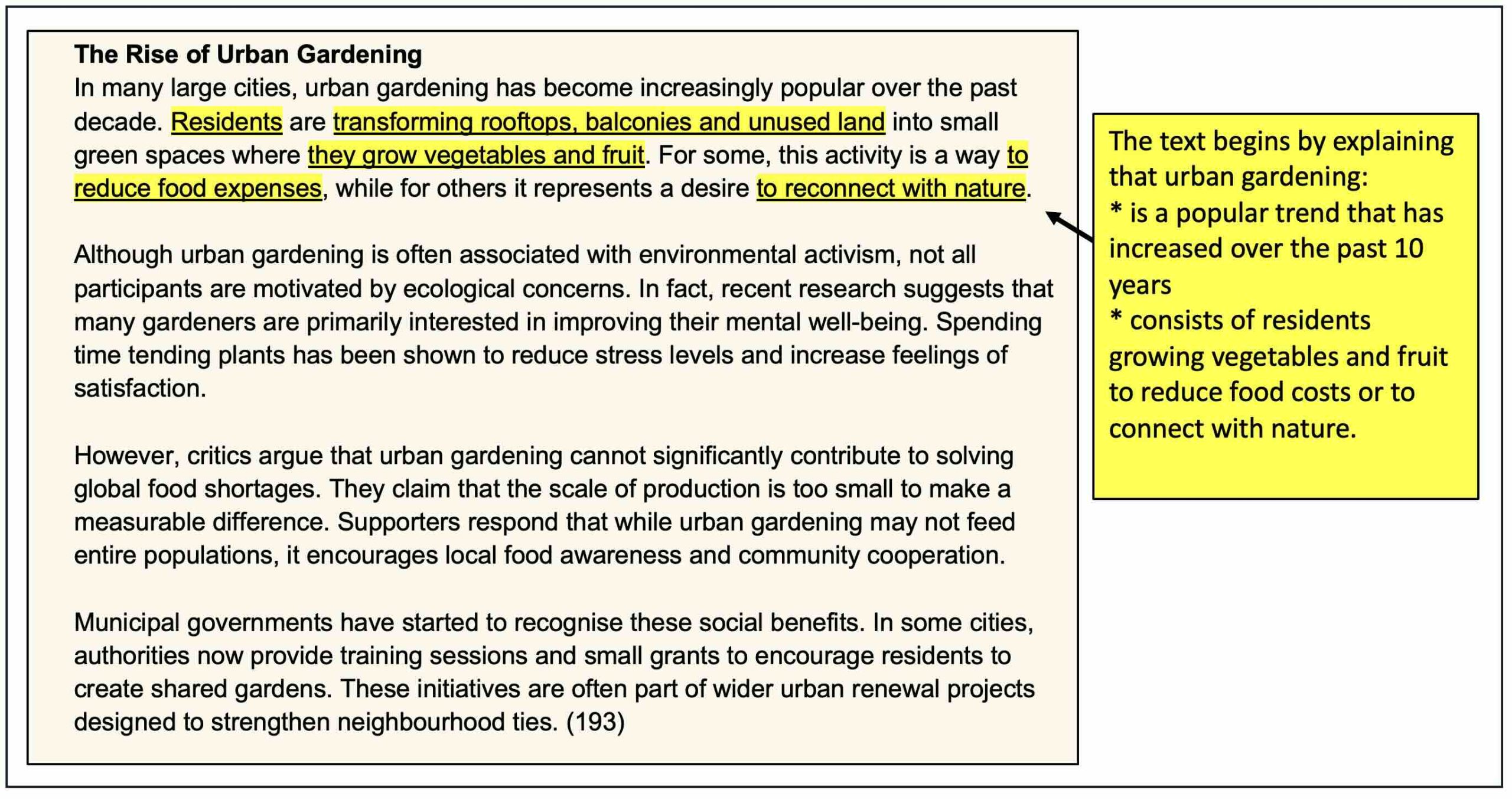

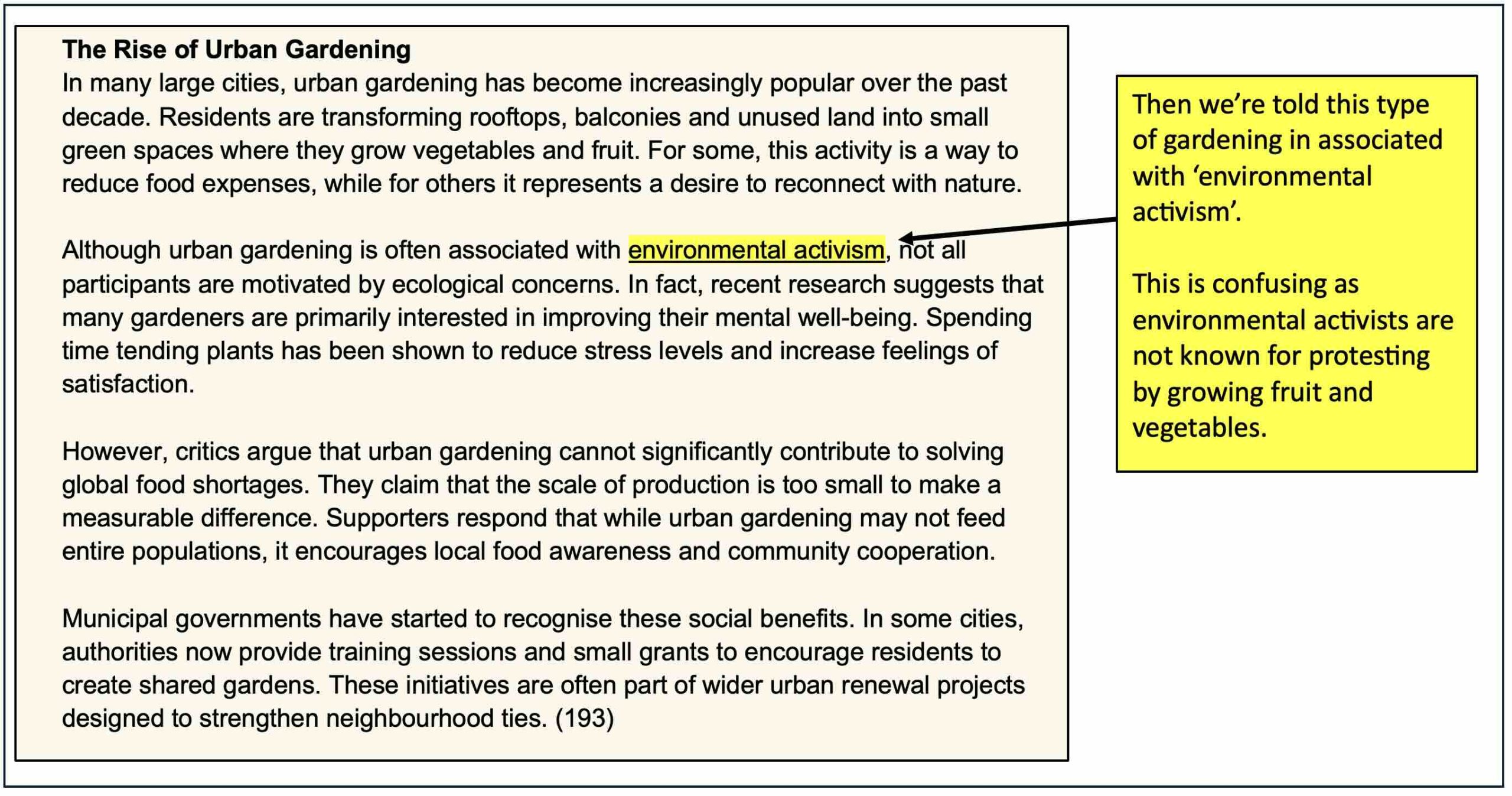

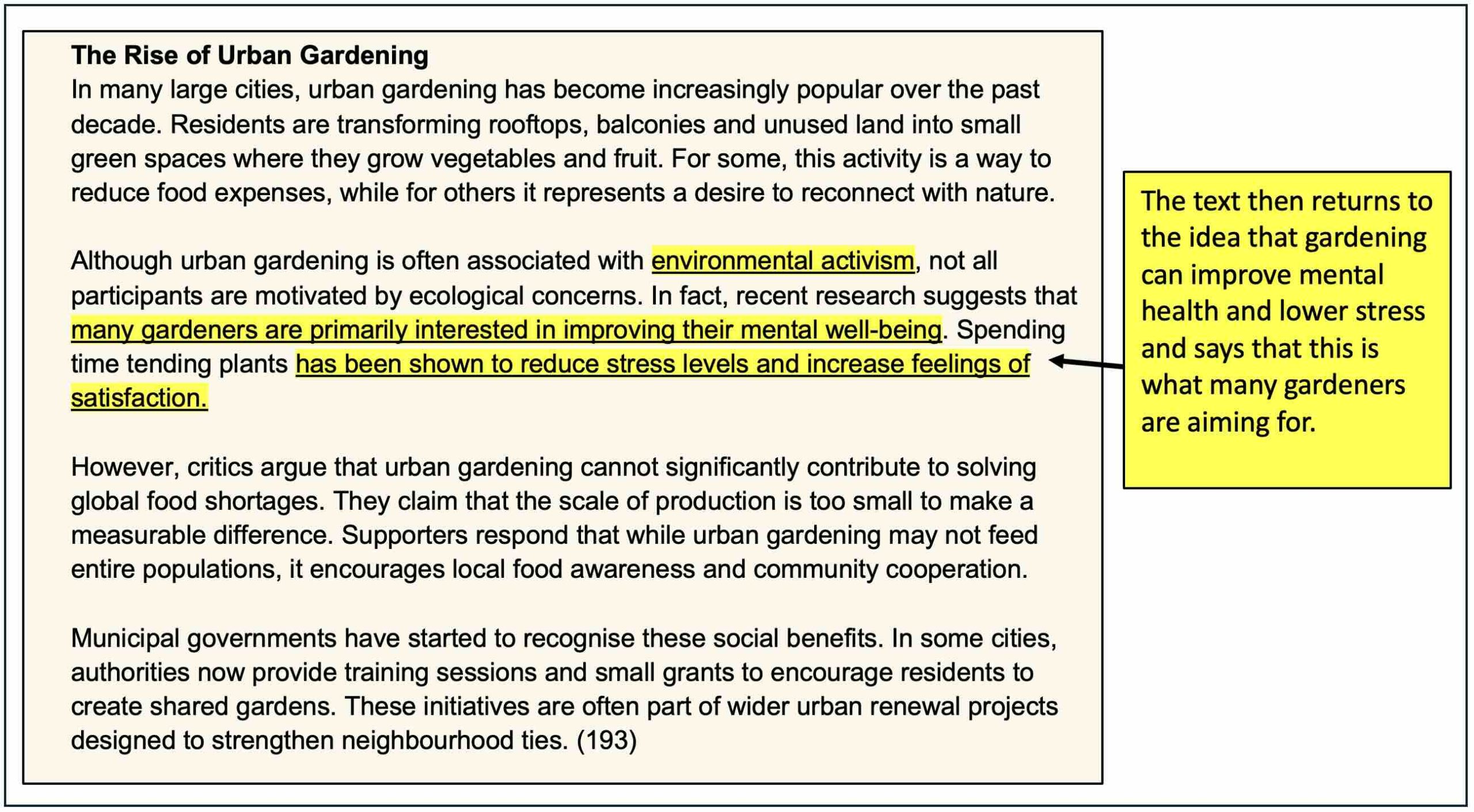

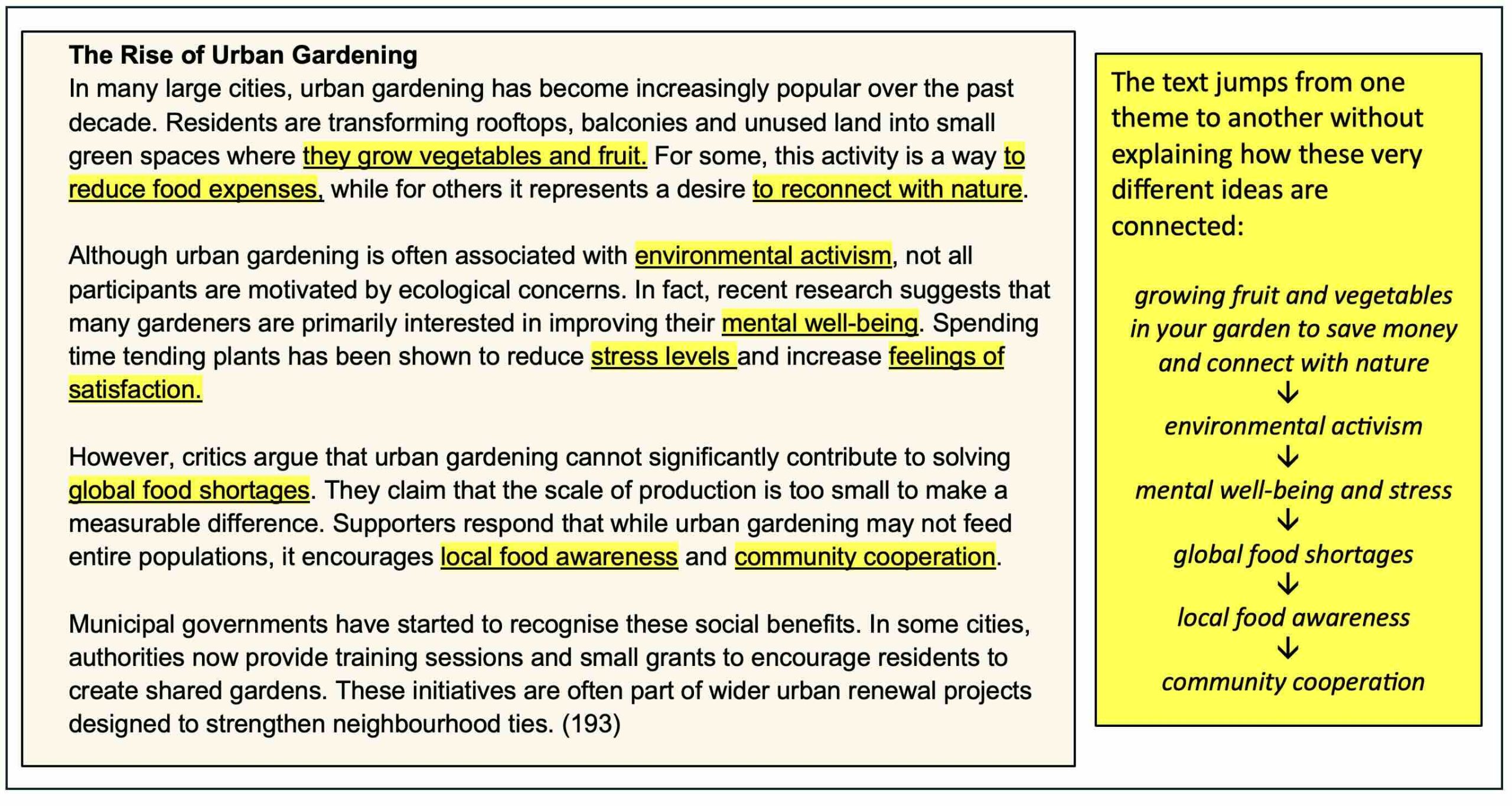

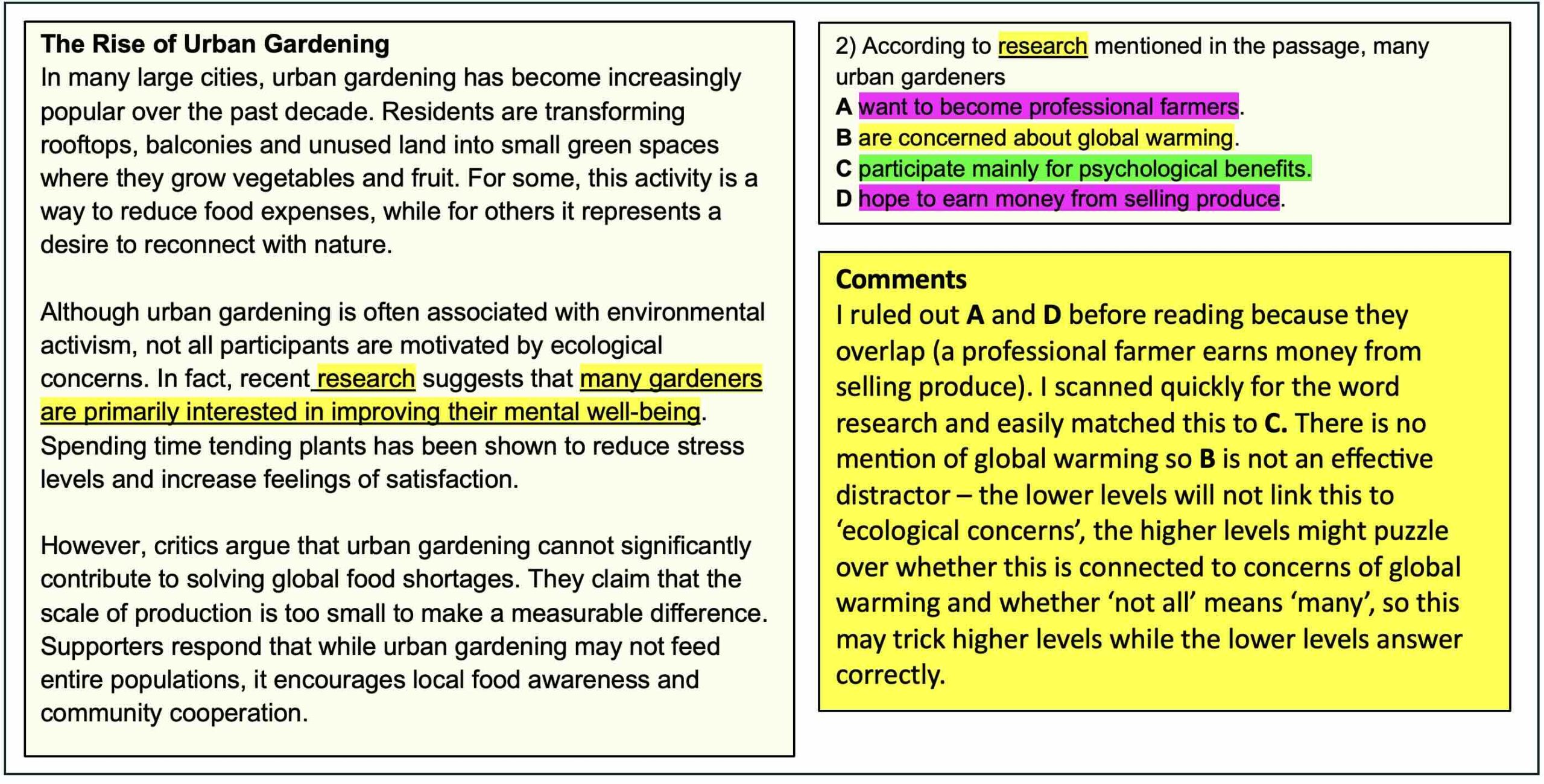

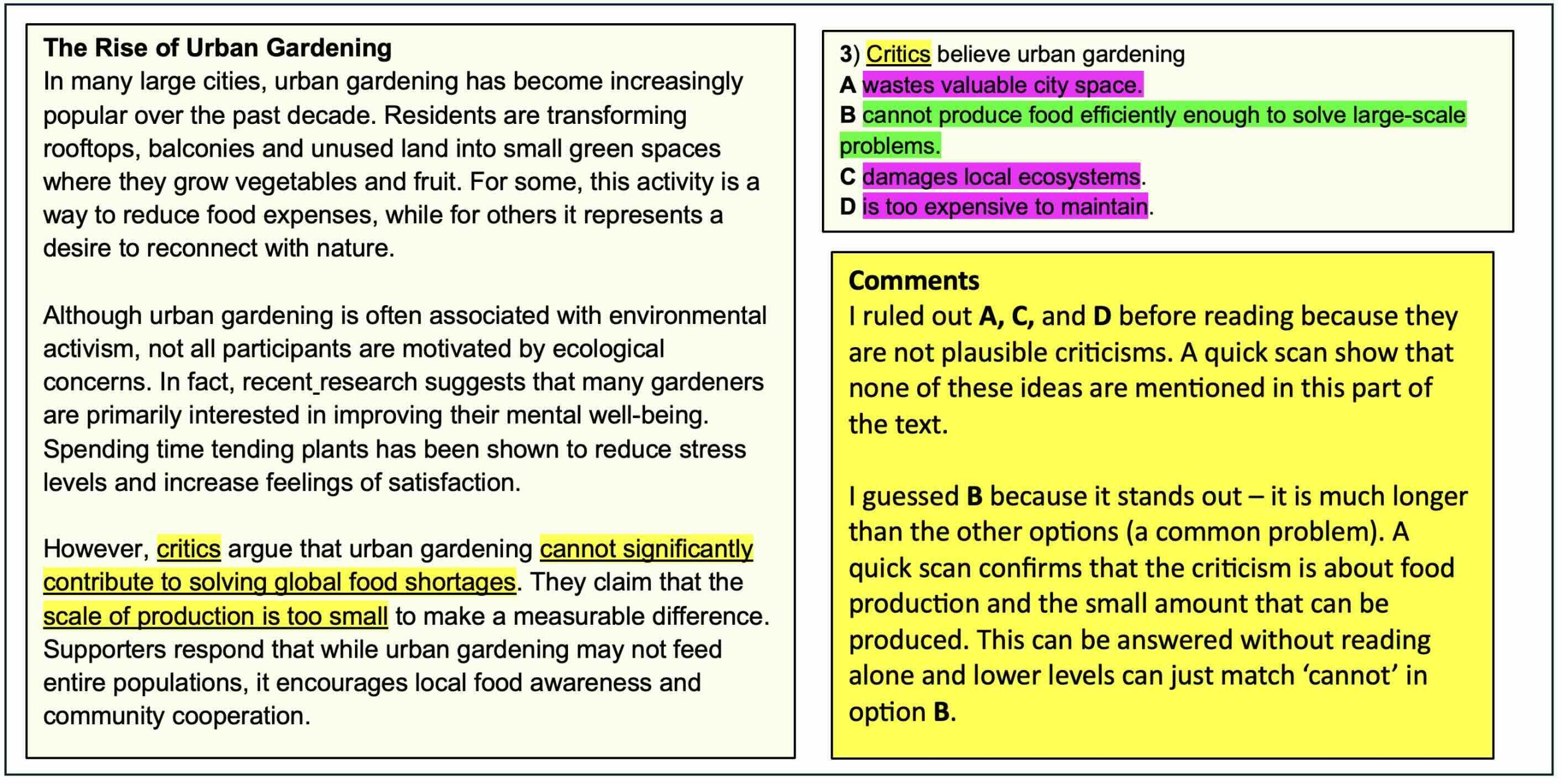

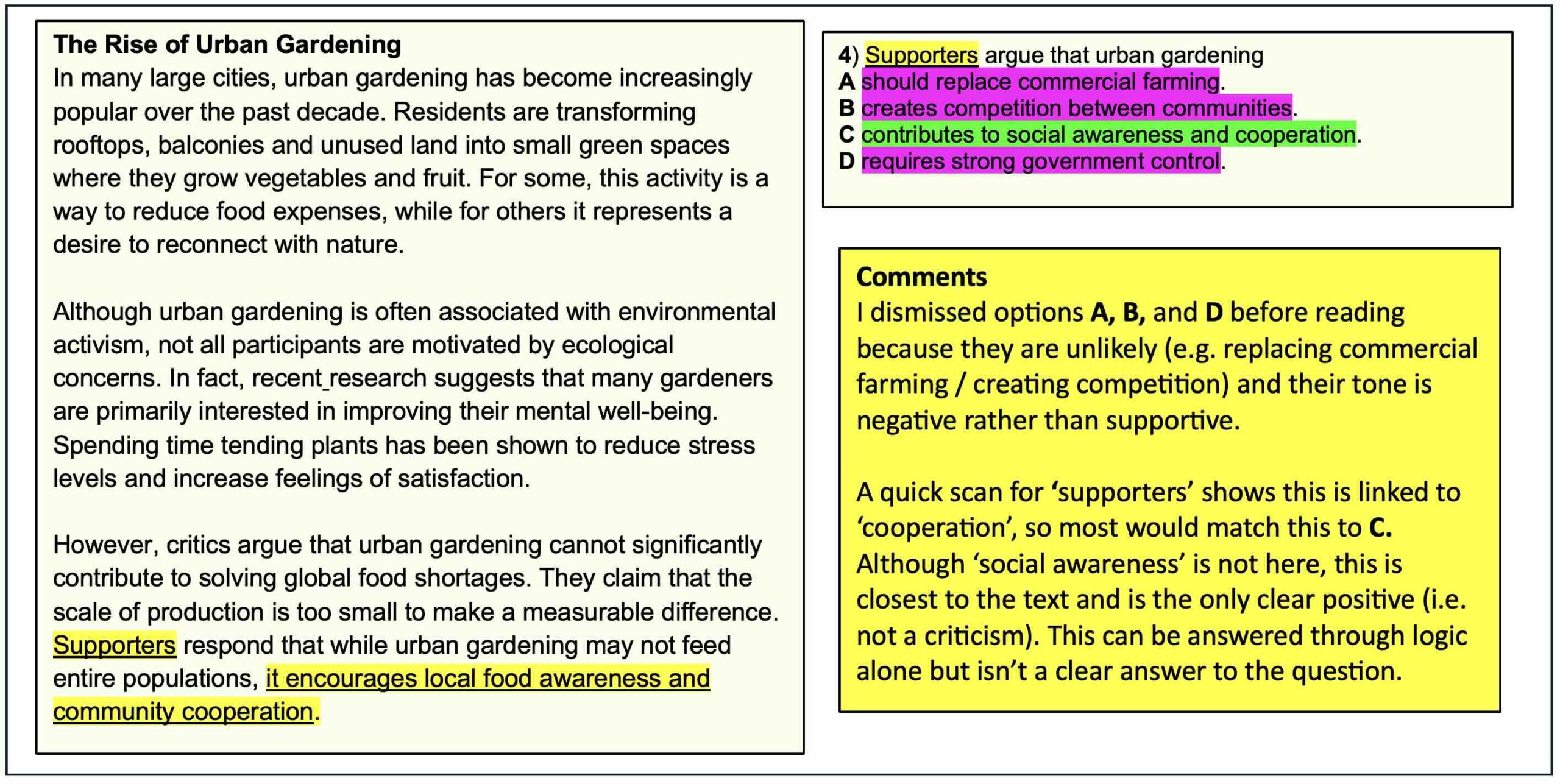

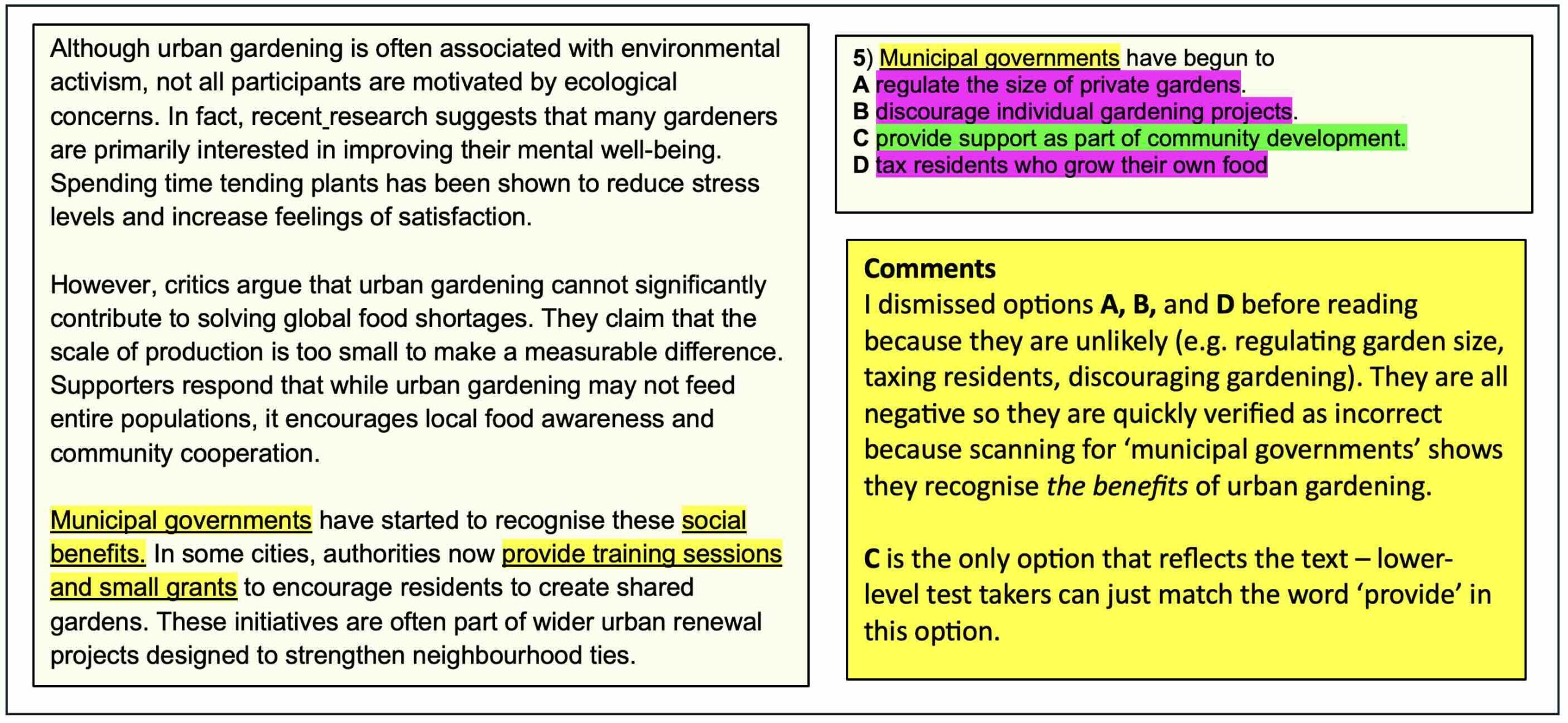

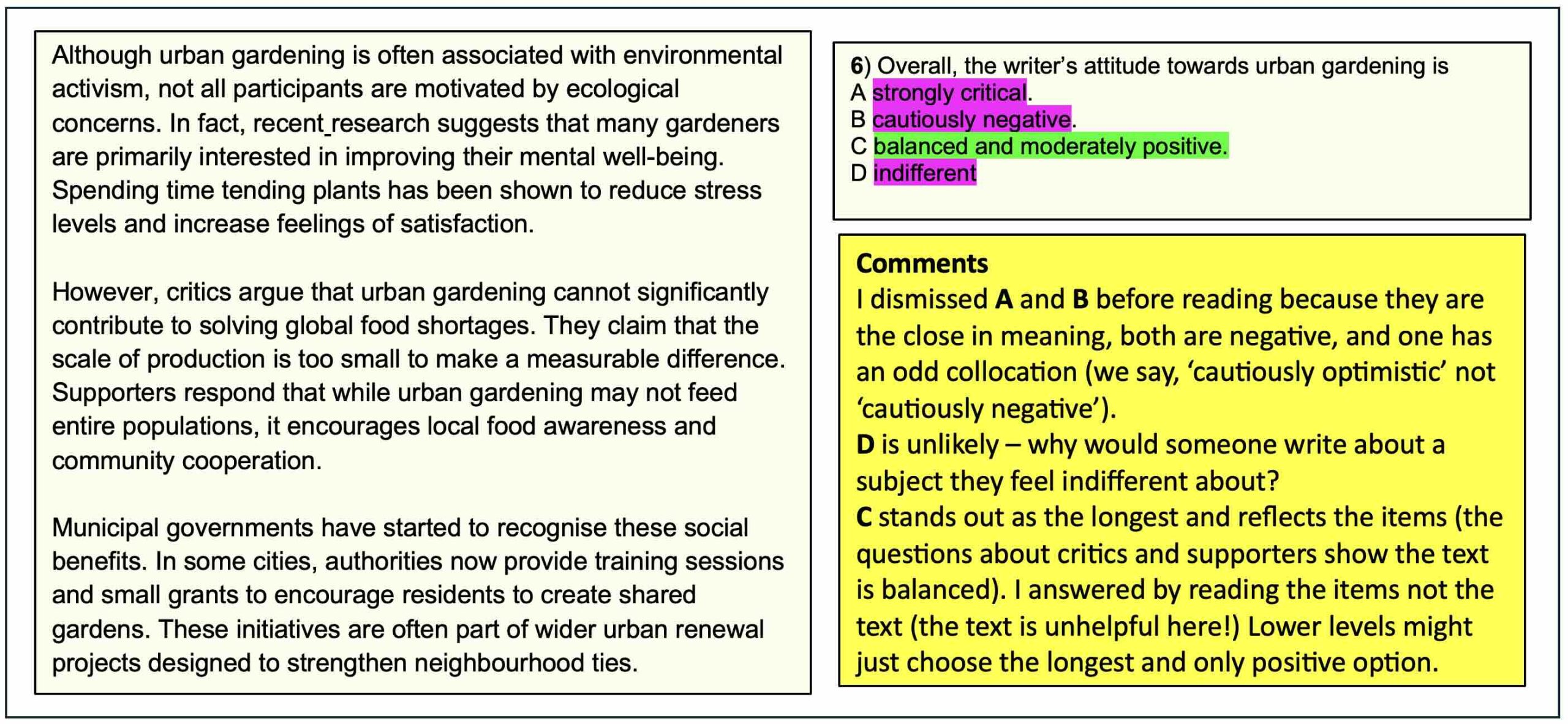

You can see my comments on the text below:

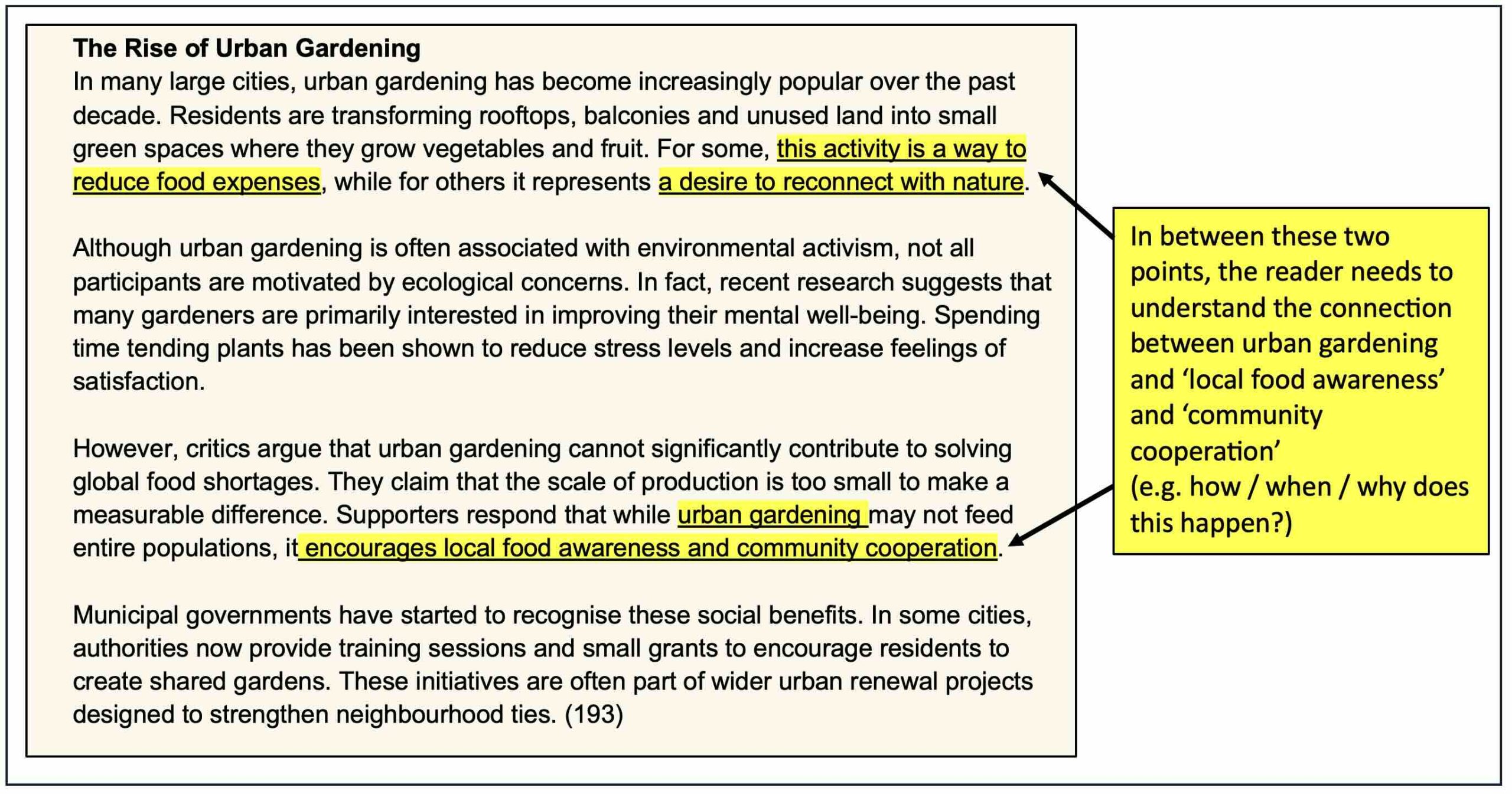

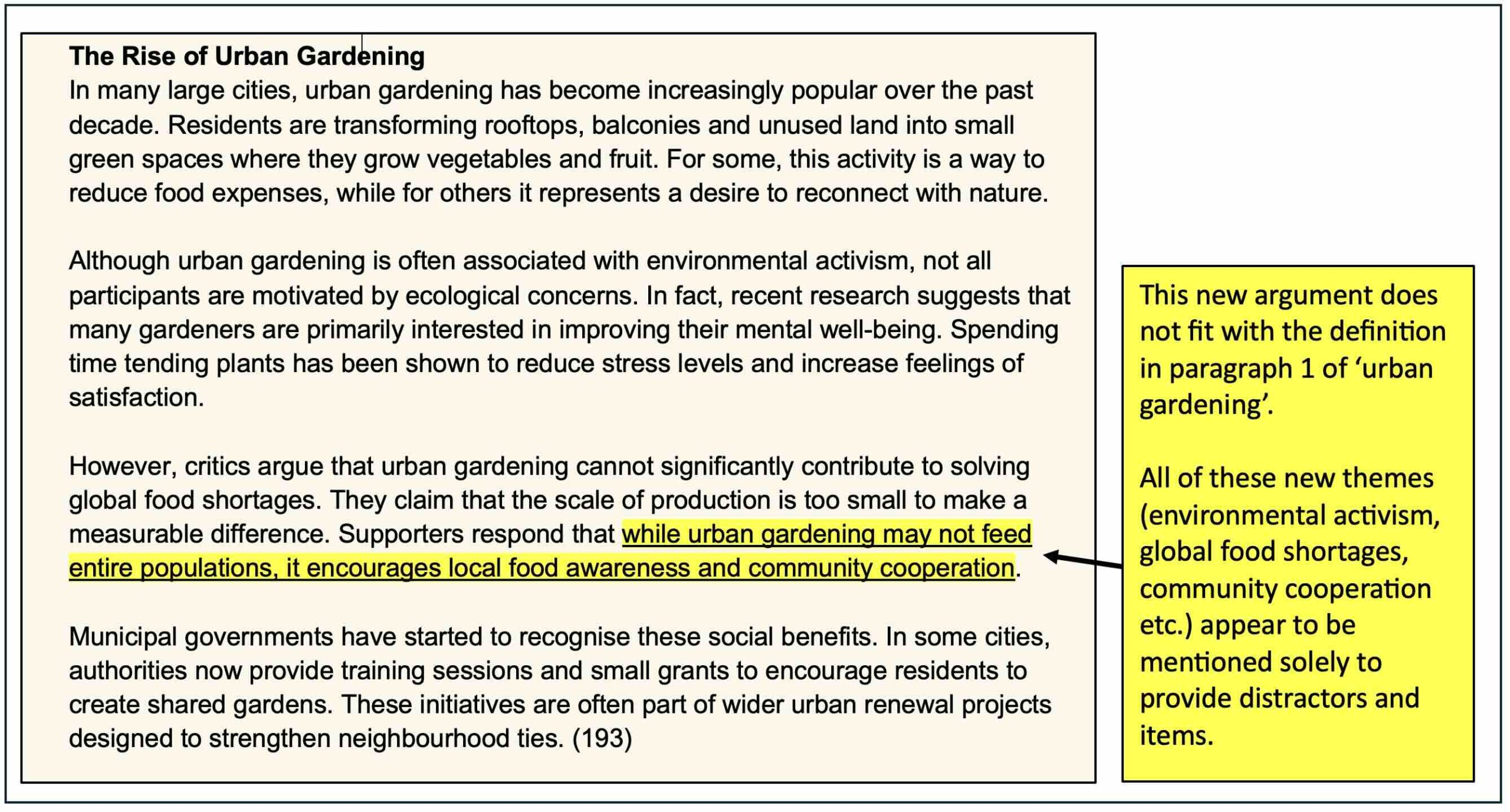

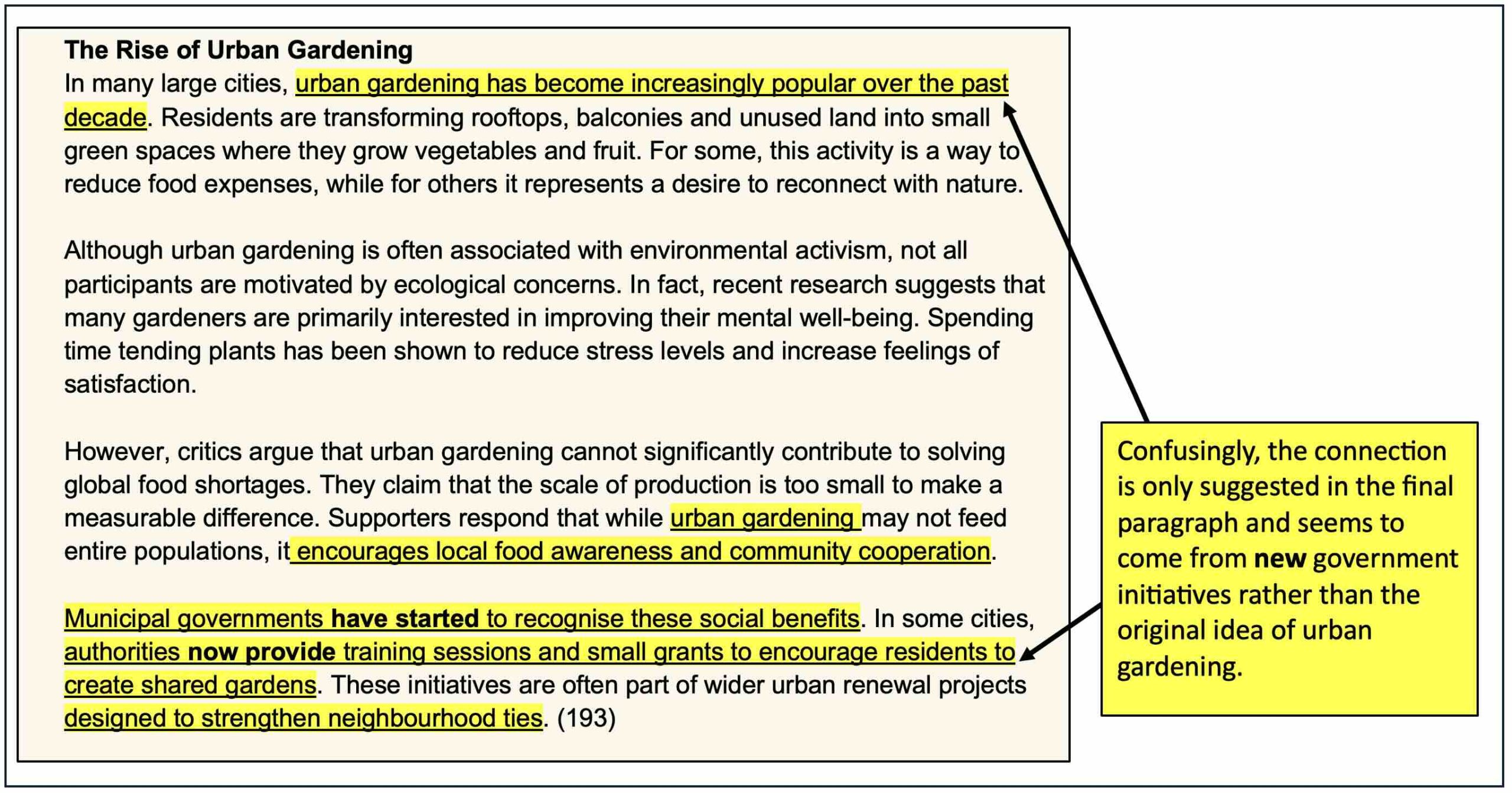

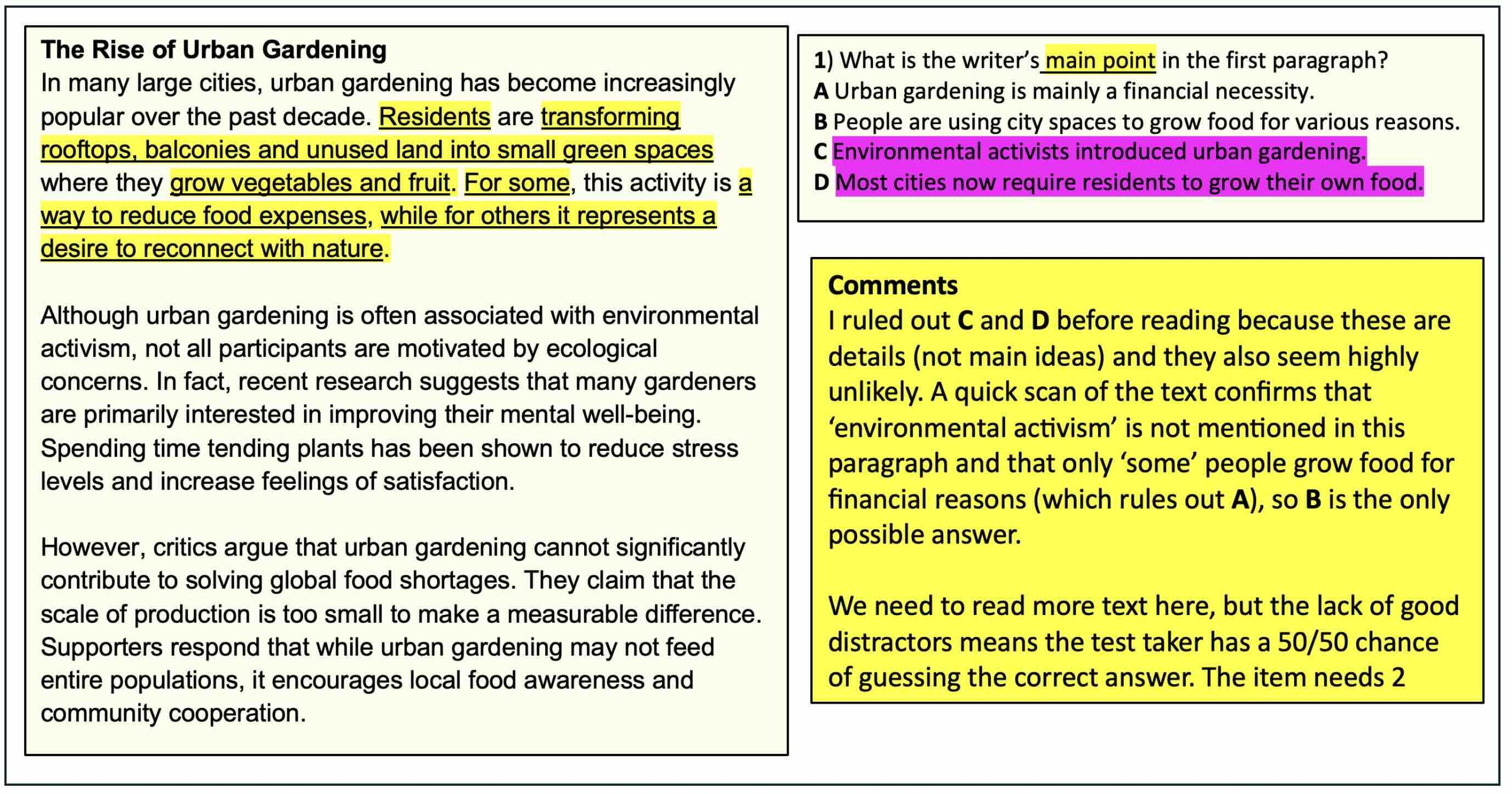

These problems in the text are important. The lack of logical connection and cohesion means that we cannot assess test takers’ understanding of how ideas are connected. Instead, the focus has to be on individual sentences. This is confirmed when we look at the items and how they work.

Despite the problems in the items, the chatbot was happy to skip any editing and trialling and present a statistical analysis of the items that any test writer would be extremely pleased with! Such data can only come from trialling the test with a sufficiently large group of mixed-level test takers (known as the cohort). When I asked about this group, ChatGPT said, ‘That’s an excellent question …The short answer is: there was no real cohort.’ For me, this cements the idea that all of this is just role play.

WHY TEST WRITING CANNOT BE AUTOMATED

AI is an attempt to automate a task. When item writing is automated, the patterns are easy to find, and they also reflect the beliefs and biases of the people who programmed this particular agent. For example, we can see that ‘distractors’ are created either by using more extreme words than the text does (e.g. the item says ‘only’ or ‘mainly’ but the text says ‘some’) or by referring to something that is not mentioned in the relevant part of the text (a good sign that there is not enough information there for a multiple choice task). The correct options are often the longest, because they are the only ones that are clear and accurately reflect the text. These problems are typical of online test materials and of books written by people who lack test writing skills. Easily identified patterns like this lead test takers to believe there are ‘tricks’ they can use to avoid reading, but such tricks do not work on well-written test materials.
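Patterns like these are regular enough that even a crude script can flag them. The sketch below is a hypothetical pre-edit helper, not part of any real test writing tool: it checks whether the keyed option is the longest (by word count) and whether any distractor contains one of a small, invented list of ‘extreme’ words such as ‘only’ or ‘mainly’. The item format and the example items are made up for illustration.

```python
from dataclasses import dataclass
from typing import Dict, List

# Hypothetical list of 'extreme' words often used to build weak distractors.
EXTREME_WORDS = {"only", "mainly", "always", "never", "all", "entirely"}


@dataclass
class Item:
    options: Dict[str, str]  # option letter -> option text
    key: str                 # letter of the keyed (correct) option


def word_count(text: str) -> int:
    return len(text.split())


def key_is_longest(item: Item) -> bool:
    """True if the keyed option has more words than every distractor."""
    key_len = word_count(item.options[item.key])
    return all(key_len > word_count(text)
               for letter, text in item.options.items() if letter != item.key)


def extreme_distractors(item: Item) -> List[str]:
    """Letters of distractors containing one of EXTREME_WORDS (case-insensitive)."""
    flagged = []
    for letter, text in item.options.items():
        words = {w.strip(".,;:!?'\"").lower() for w in text.split()}
        if letter != item.key and words & EXTREME_WORDS:
            flagged.append(letter)
    return flagged


def pattern_report(items: List[Item]) -> Dict[str, float]:
    """Share of items showing each giveaway pattern."""
    n = len(items)
    if n == 0:
        return {"key_longest": 0.0, "has_extreme_distractor": 0.0}
    return {
        "key_longest": sum(key_is_longest(i) for i in items) / n,
        "has_extreme_distractor": sum(bool(extreme_distractors(i)) for i in items) / n,
    }


# Invented example items.
items = [
    Item({"A": "Only farmers use the new method.",
          "B": "Some farmers have adopted the method because it saves water.",
          "C": "The method is mainly used in cities."}, key="B"),
    Item({"A": "It rained.",
          "B": "The harvest failed.",
          "C": "Prices rose sharply."}, key="A"),
]

print(extreme_distractors(items[0]))  # ['A', 'C']
print(pattern_report(items))  # {'key_longest': 0.5, 'has_extreme_distractor': 0.5}
```

A high rate on either measure is only a warning sign. A script like this cannot show that an item works; that still takes a human pre-edit and trialling.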

Without working, plausible distractors, the test will not discriminate well between levels (some of these items would likely have a negative discrimination) and will be unreliable. A teacher may be able to adapt the text into some useful teaching materials for students at A2-B1 level, but, as the items could probably be answered using logic and world knowledge alone, it would not make a reliable assessment tool and would be rejected at pre-edit.

AI AS A TOOL – WHAT CAN I USE AI FOR?

The text above is a good example of what ChatGPT actually does: it collects and reassembles language connected to a topic, matching this to the language patterns it has identified. This gives us a better understanding of how AI can be used.

One teacher told me they use AI as a sort of teaching assistant to create grammar drills and vocabulary lists. This seems like a great idea, and I am sure it is more than capable of quickly creating good practice materials like this. However, the teacher also said that they use it to generate ideas, which their students then use to write an essay. The problem here is that getting ideas is a key part of writing and speaking. In my eBook The Key to IELTS Speaking, I discuss the types of memory that help in getting ideas when speaking and writing. If you use AI to generate ideas, your students miss out on the opportunity to exercise their working memory (used to process ideas) and to practise recall, which is essential for long-term memory and learning. So I wouldn’t recommend this.

If you want to become an expert in speaking and writing for IELTS, follow the lessons in my Key to IELTS eBooks:
